Mastering NLP From Foundations to Agents - Second Edition, the Qlib Project | Issue 80

A weekly curated update on data science and engineering topics and resources.

This week’s agenda:

Open Source of the Week - the Qlib project

New learning resources - Deep learning and GenAI courses, a prompt engineering crash course, and OpenClaw optimization

Book of the week - Mastering NLP From Foundations to Agents - Second Edition by Lior Gazit and Meysam Ghaffari.

📌 I share daily updates on TikTok, Telegram, and WhatsApp.

Are you interested in learning how to set up automation using GitHub Actions? If so, please check out my course on LinkedIn Learning:

Open Source of the Week

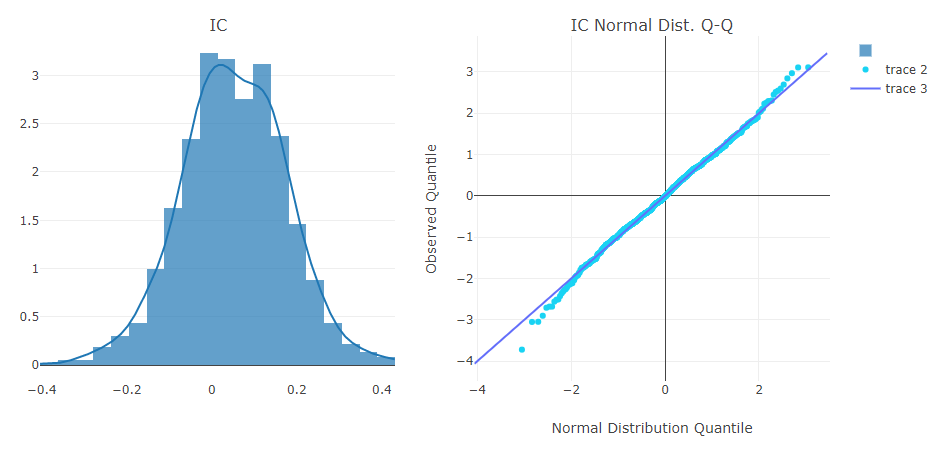

This week’s focus is on the Qlib project - an open source platform from Microsoft designed to support end-to-end quantitative investment research using AI and machine learning. It provides a full pipeline—from data ingestion and feature engineering to model training, backtesting, and portfolio optimization—allowing researchers and practitioners to develop, evaluate, and deploy trading strategies in a unified environment. By combining scalable data infrastructure with support for multiple ML paradigms (including supervised learning and reinforcement learning), Qlib addresses the complexity of financial markets and enables reproducible, data-driven experimentation in quantitative finance.

Project repo: https://github.com/microsoft/qlib

Key Features

Covers the full quant workflow, including data processing, modeling, backtesting, and execution

Supports multiple learning paradigms such as supervised learning, reinforcement learning, and market dynamics modeling

Provides a modular architecture with loosely coupled components that can be used independently or end-to-end

Includes a rich model zoo with implementations of common ML and deep learning models (e.g., LightGBM, LSTM, Transformers)

Enables automated research workflows (e.g., qrun) for training, evaluation, and reporting

Offers a high-performance data infrastructure optimized for large-scale financial time series

Supports advanced tasks across the investment lifecycle, including alpha generation, risk modeling, portfolio optimization, and order execution

Extensible with tools like RD-Agent for automated factor mining and model optimization
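To make the workflow stages listed above more concrete, here is a minimal, hedged sketch in plain Python of the feature engineering, modeling, and backtesting steps. It does not use Qlib's API: the function names, the momentum rule, and the price series are all made up for illustration.

```python
# Toy illustration of the quant workflow stages; this is NOT Qlib's API.
# All names and numbers below are hypothetical.
from statistics import mean


def momentum_feature(prices, window):
    """Feature engineering: trailing return over `window` days (None until enough history)."""
    return [
        None if i < window else prices[i] / prices[i - window] - 1.0
        for i in range(len(prices))
    ]


def signal_from_feature(feature):
    """'Model': go long (1) when trailing momentum is positive, otherwise stay flat (0)."""
    return [1 if f is not None and f > 0 else 0 for f in feature]


def backtest(prices, signal):
    """Backtest: apply the previous day's signal to each day's return to avoid look-ahead."""
    daily = []
    for i in range(1, len(prices)):
        ret = prices[i] / prices[i - 1] - 1.0
        daily.append(signal[i - 1] * ret)
    return daily


prices = [100.0, 101.0, 103.0, 102.0, 105.0, 107.0, 106.0, 110.0]  # made-up data
feature = momentum_feature(prices, window=2)
signal = signal_from_feature(feature)
strategy_returns = backtest(prices, signal)
print(f"mean daily strategy return: {mean(strategy_returns):.4f}")
```

In a real project, each of these hand-written steps would be replaced by the corresponding Qlib component, so treat the sketch only as a map of how the stages connect.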

More details are available in the project documentation.

License: MIT

New Learning Resources

Here are some new learning resources that I came across this week.

Deep Learning Course

The following course by edureka provides an introduction to Deep Learning. This 10-hour course covers topics such as:

Introduction

Math for machine learning

Deep learning with Python

Neural network

Convolutional neural network (CNN)

Recurrent neural network (RNN)

LSTM

Transformers

Generative AI Course

The following course by Intellipaat provides an in-depth introduction to Generative AI. This 11-hour course covers the following topics:

Introduction to Gen-AI

RNN

Gradient algorithm

Encoder and decoder algorithms

RNN and LSTM

Tokenization and padding

Hyperparameter tuning

Seq2Seq model

Prompt Engineering Crash Course

This new tutorial by Tech with Tim introduces prompt engineering, covering the top techniques and methods as well as advanced strategies for getting the most out of LLMs.

Optimize OpenClaw

The following tutorial by Tech with Tim focuses on optimization methods to reduce token usage in OpenClaw. This includes caching and context-pruning methods, as well as auditing token usage.
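As a rough illustration of the two ideas mentioned above, here is a small Python sketch of context pruning and response caching. It is not taken from the tutorial and does not reflect how OpenClaw works internally: the word-count token estimate, the function names, and the stand-in model are all assumptions.

```python
# Hypothetical sketch of context pruning and caching; not OpenClaw's implementation.


def estimate_tokens(text):
    """Crude token proxy: whitespace-separated words. Real tokenizers count differently."""
    return len(text.split())


def prune_context(messages, budget):
    """Keep the most recent messages whose combined estimated size fits within `budget`."""
    kept, used = [], 0
    for msg in reversed(messages):
        cost = estimate_tokens(msg)
        if used + cost > budget:
            break
        kept.append(msg)
        used += cost
    return list(reversed(kept))


class ResponseCache:
    """Return a stored answer for a repeated prompt instead of calling the model again."""

    def __init__(self):
        self._store = {}
        self.hits = 0

    def get_or_compute(self, prompt, compute):
        if prompt in self._store:
            self.hits += 1
            return self._store[prompt]
        result = compute(prompt)
        self._store[prompt] = result
        return result


def fake_model(prompt):
    """Stand-in for a model call, used only for this example."""
    return prompt.upper()


history = ["hello there", "please summarize the long report", "thanks", "what is next"]
pruned = prune_context(history, budget=5)

cache = ResponseCache()
first = cache.get_or_compute("hi", fake_model)
second = cache.get_or_compute("hi", fake_model)  # served from the cache
print(pruned, cache.hits)
```

Pruning from the newest message backwards keeps the most recent context, which is a common default; other strategies, such as summarizing older messages, trade some accuracy for even lower token usage.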

Book of the Week

This week’s focus is on a new NLP book - Mastering NLP From Foundations to Agents - Second Edition by Lior Gazit and Meysam Ghaffari. This book offers a comprehensive, end-to-end journey through natural language processing — from core mathematical and machine learning foundations to modern deep learning techniques and large language models (LLMs). It combines theory with hands-on Python examples, enabling readers to build, evaluate, and deploy NLP systems while understanding how today’s LLM-powered applications work under the hood.

Topics covered in the book

Mathematical foundations for NLP — linear algebra, probability, statistics, and optimization as the backbone of ML/NLP models

Machine learning for text data — feature engineering, model training, evaluation, and handling real-world data challenges

Text preprocessing pipelines — tokenization, normalization, NER, POS tagging, and preparing text for modeling

Classical NLP tasks — text classification and traditional ML approaches for language understanding

Deep learning for NLP — neural networks, transformers, and modern architectures like BERT and GPT

Large Language Models (LLMs) — theory, training (including RLHF), capabilities, limitations, and real-world usage

Building LLM applications — prompt engineering, retrieval-augmented generation (RAG), and frameworks like LangChain

Advanced applications and trends — multi-agent systems, system design, and the future impact of NLP and AI

This book is ideal for data scientists, machine learning engineers, and developers with basic Python and ML knowledge who want to deepen their understanding of NLP — from foundational concepts to building modern LLM-powered applications in practice.

The book is available online on the publisher’s website, and a hard copy can be purchased on Amazon.

Have any questions? Please comment below!

See you next Saturday!

Thanks,

Rami